13 thoughts on Anthropic, OpenAI and the Department of War

The future is here, whether you're ready or not.

When I went to bed last night[1], it appeared that Secretary of War Pete Hegseth (it still feels surreal to type that phrase) had potentially undermined American competitiveness by instructing the federal government not to use Claude and by designating the company behind it, Anthropic, as a supply chain risk, a move that could force Nvidia, Amazon, Google and other companies that contract with the federal government to divest from Anthropic. Was the military going to be stuck using Elon Musk’s Grok, a model that has its uses but is decidedly not on the lead lap and is reportedly considered too unreliable for classified settings?

Nope. Instead, I awoke to news that the Pentagon had reached an agreement with Anthropic’s rival OpenAI. (And also that we were bombing Iran.) This is at least a little more rational, which is not to say that you should feel happy about any of this. The story is complicated and still developing; Anthropic will take its case to court, and the government could TACO out (“Trump Always Chickens Out”) and back down, for instance by signing the deal with OpenAI but unbanning Claude.

Nevertheless, the intersection of AI and politics falls squarely into the Silver Bulletin wheelhouse, and it’s something I’m sure we’ll be covering more and more. I also want to get into the habit of writing about these stories while they’re still in progress.[2] So here are 13 preliminary thoughts.

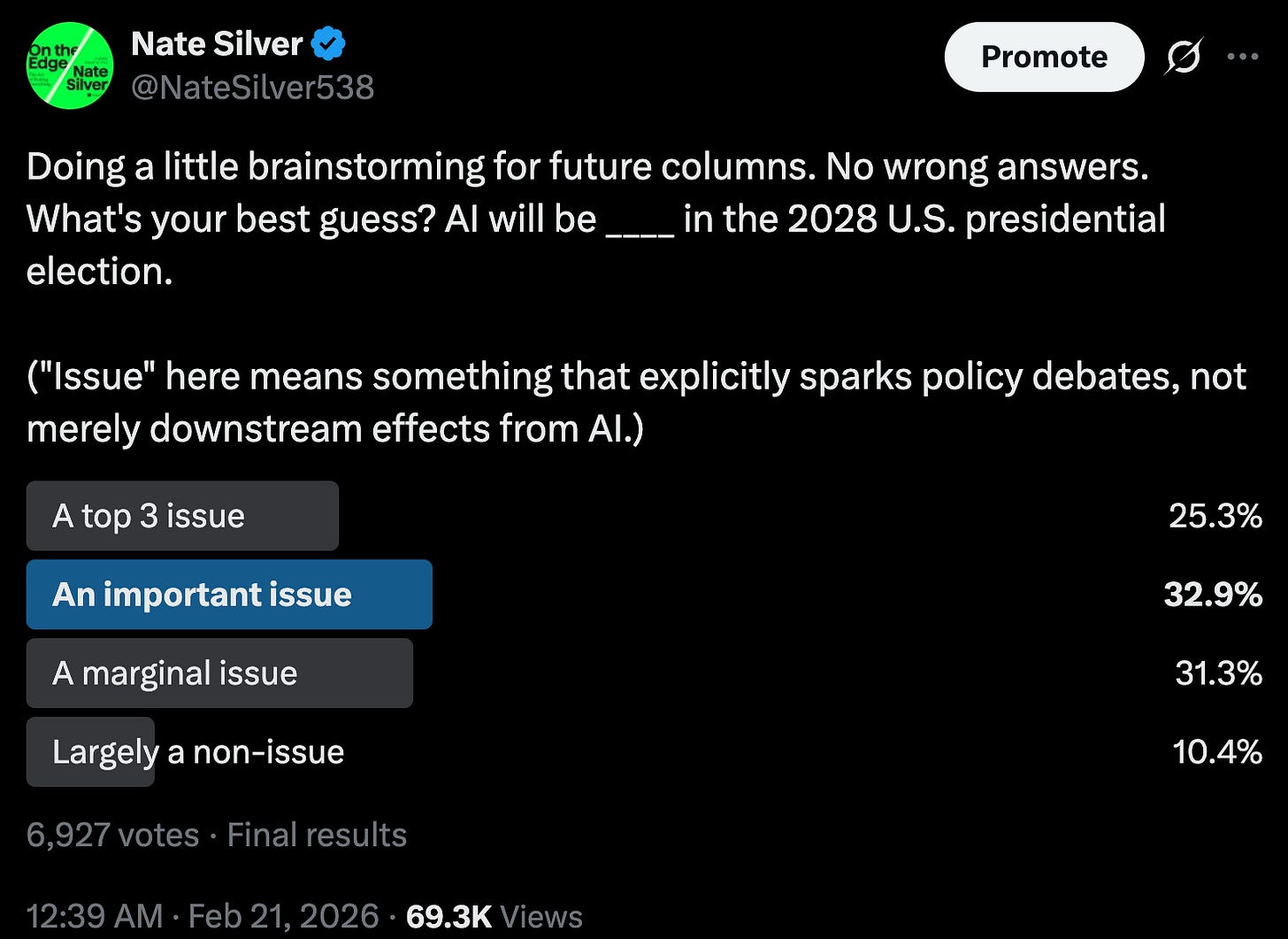

1. February 2026 is likely to be remembered by historians as the inflection point when we moved onto some sort of accelerated timeline for AI-related developments. Not necessarily with respect to the technology itself (although see bullet #3 below); I remain fairly skeptical of the hyper-turbocharged timelines toward superintelligence envisioned by the AI 2027 report, for example. Rather, it’s the point at which AI became a major storyline (maybe the major storyline) in politics and economics, too big even for the skeptics to ignore. The 42 percent of people in this Twitter poll a week ago who said that AI will be only “a marginal issue” or “a non-issue” in the 2028 election are probably going to be wrong.

2. A related phrase that’s been stuck in my head since last night is “welcome to the big leagues”. Between the government and CEOs like Sam Altman (OpenAI) and Dario Amodei (Anthropic), we’ve exited the white paper phase, and everyone is now making extremely high-stakes, non-hypothetical decisions.

3. An important predicate to all of this: I don’t think I’m alone in thinking that there’s been some sort of step-change in AI capabilities over the past few months. While I haven’t done any heavy-duty programming since we finished up ELWAY in October, I use LLMs like Claude and ChatGPT more and more every day, and I was already using them a lot. (I’m paying for the expensive tiers of both Claude and ChatGPT.) They’re by no means perfectly reliable, and they still have shortcomings in areas that require specialization. But in my experience, after stagnating in mid/late 2025, they’ve become much more reliable and much faster in recent months, and they can now handle much more complicated multi-part queries.

4. I’m also not alone in increasingly considering Claude to be the best model, and I don’t think it’s particularly close, though I still mix in some ChatGPT (~30% of the time) and Gemini (~10%). This is reflected in the valuations at which these companies have been raising capital. Although both are growing very quickly, Anthropic is catching up to OpenAI on a logarithmic scale, or at least it had been prior to this week’s developments.

5. As Scott Alexander is getting at, you can be sympathetic to Anthropic while also acknowledging that none of the answers are easy. The United States government has an interest in having the best military capabilities, the future of warfare is obviously going to involve AI whether you like it or not, and decisions have to be made quickly without getting Sam or Dario on the phone. In theory, it’s up to the courts and the political system to make sure these decisions are made lawfully, though whether rapid AI growth is compatible with democracy isn’t so clear (sorry not to have a more optimistic take there).

6. Anything Altman says about his deal with the government is obviously going to involve a heavy dose of spin. I’m not going to get into the precise mechanics of which guardrails OpenAI will relax that Anthropic would have insisted on keeping, and that may not be the kind of thing that’s agreed upon in writing anyway. But OpenAI will obviously be materially more permissive, or we wouldn’t be in this position in the first place.

7. Likewise, Trump or Hegseth implying that Anthropic is too “woke” is a red herring: political cover, aimed at the base, for what are difficult and potentially unpopular decisions. If you’ve been reading Silver Bulletin for a long time, you’ll know that I’m not sympathetic to wokeness. But with the exception of a hilarious Google misstep two years ago, in which Gemini’s heavy-handed system prompts forced the depiction of, for instance, multiracial Nazi soldiers, I don’t find either the models themselves or the AI lab employees I’ve spoken with to be particularly “woke”. Frankly, wokeness is kind of a luxury good, and they have more important things to worry about given how quickly everything is moving.

8. However, top AI engineers and some of the leadership (Altman less than others) are certainly more philosophically minded than your typical Silicon Valley leaders, and are often quite concerned with ethics from an effective altruist (EA) or rationalist mindset. If you read On the Edge (which, I’ve gotta say, seems to be becoming more and more relevant by the day!), you’ll find I have a lot of critiques of EA and rationalism despite sympathizing with them more than the average political commentator does. But they’re definitely not a “normie” way of thinking.[3] I don’t think the Trump administration is likely to “get” how people like Amodei think; it’s all going to feel a bit alien to them.[4]

9. I have to wonder whether this will lead to a significant exodus of talent from OpenAI. Claude has already caught up to or exceeded ChatGPT despite starting out with considerably fewer resources, which suggests an edge in skill, talent and culture, one that this episode is only going to reinforce, even if the deal helps OpenAI raise capital.

10. I also assume that, among the general population, Anthropic now becomes more lib-coded and OpenAI more conservative-coded. I’m not sure this is a great position for OpenAI to be in, particularly as Trump becomes more unpopular. As Musk has found out with Tesla, it’s probably still the case that most consumers of high-tech products are fairly left/progressive.

11. If you’re extremely concerned about existential risk from misaligned AI and just want things to pause or slow down … I’m not sure it’s so clear how to read all of this. For one thing, this could have something of a chilling effect on AI labs forming partnerships with the government. For another, to the extent Anthropic was in the lead, this is basically a turtle shell thrown at them, like in a game of Mario Kart, that has pulled them back to the pack. Anthropic’s projected future valuation on Polymarket is considerably down on the news.

12. In my notes last night, when it looked like the government had canceled Claude without any alternative, I wrote “very bad to have idiots in charge atm”. Now that the deal with OpenAI has been announced, it looks more like everyone has gotten what they wanted in some game-theoretic sense. The government gets more control, even if it now has to use what’s probably the second-best product. OpenAI and Altman pulled off a power play, and OpenAI increasingly looks like the Facebook/Meta of the AI world, i.e. cutthroat and very bottom-line focused, but that had been the direction things were heading anyway. And Anthropic actually stood up for itself and gets to entrench its brand as the most ethical of the AI labs.

13. Nonetheless, I’d feel a lot better about this if American government and state capacity were in better shape right now. For that matter, I think Silicon Valley broadly, and the AI labs in particular, often have naive views about politics and tend to treat it as a rounding error in calculating p(doom) and other things, when it very much isn’t. I admittedly have a dark sense of humor about this stuff, but there have been too many recent moments that feel like deleted scenes from Idiocracy or Dr. Strangelove.

[1] Don’t worry, readers, I haven’t suddenly become an “early bedtime” guy, but I’m in Europe this week.

[2] It’s not as though AI ever really slows down anyway.

[3] And there are undoubtedly a lot of spectrum-y/neurodiverse personas in AI and EA circles.

[4] And to some extent vice versa.

The two restrictions that kept the US and Anthropic from reaching agreement were: "no mass surveillance of American citizens, and no fully autonomous weapons without a human in the loop." (https://www.bloomberg.com/opinion/articles/2026-02-27/anthropic-vs-pentagon-trump-administration-is-hurting-innovation) These are not trivial issues to be brushed aside, which is how I read Nate's 2/28/26 Silver Bulletin post as treating them. Surveillance of the US population is a bad thing and should be stopped, or at the very least slowed. Surveillance in the hands of an administration that does not go to court for arrest warrants is a license to throw in jail any person perceived as anti-Trump. And given Nate's own description of AI's shortcomings, why would we want AI to make military decisions without human intervention?

It is a good thing that Anthropic would not agree to allow its software to surveil Americans or launch weapons without a human's decision. (As to the latter, think of nukes launched without a human deciding to do so.) So this is not a trivial matter: Sam Altman appears to be amoral, and we should applaud Dario Amodei's decision.

This feels like a turning point for Hegseth and the government too: designating a company a supply chain risk because it won’t do everything the White House wants is the exact opposite of free trade. Trump already decides who wins in the market, and I really don’t think he should also get to just make a company lose.