How our PRISM NBA draft model works

It's a machine learning model ... but with a lot of human curation.

Most draft models try to predict how good each player will be — some number representing future WAR, or a tier classification, or an over/under on career minutes. PRISM doesn’t quite do that. Instead, it asks a different question based on pairwise comparisons: given two prospects, which one will have the better NBA career?

This design choice matters for a few reasons. Gradient boosting regression on a target like WAR has limitations, one being that projections tend to compress toward the range of outcomes seen in the training set. Pairwise ranking doesn’t necessarily improve accuracy, but it keeps the structure of a gradient boosting model while making prospect volatility easier to interpret, as we’ll demonstrate later. It can also reward exceptional prospects who outperform the training set.

More technically, PRISM is a machine learning model: a CatBoost gradient boosted tree classifier. Each training example is the difference between two prospects’ feature vectors. Every prospect in a draft pool is compared head-to-head against every other, producing an N×N matrix of win probabilities. The PRISM score is a player’s average win rate across all of those matchups.
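As a rough illustration of the last step, here is a minimal sketch of turning a pairwise win-probability matrix into a per-player score. The function name `prism_scores` and the three-prospect matrix are made up for this example; only the "average win rate across all matchups" idea comes from the description above.

```python
def prism_scores(win_prob):
    """Average win probability of each prospect against every other prospect.

    win_prob[i][j] is the modeled probability that prospect i has the better
    NBA career than prospect j. Self-matchups on the diagonal are ignored.
    Needs at least two prospects in the pool.
    """
    n = len(win_prob)
    scores = []
    for i in range(n):
        opponents = [win_prob[i][j] for j in range(n) if j != i]
        scores.append(sum(opponents) / len(opponents))
    return scores


# Hypothetical 3-prospect pool; the probabilities are invented for illustration.
pool = [
    [0.5, 0.7, 0.9],
    [0.3, 0.5, 0.6],
    [0.1, 0.4, 0.5],
]
scores = prism_scores(pool)
```

Because every prospect faces the same set of opponents, the resulting scores are directly comparable within a pool.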

Stage 1: Role prediction

Basketball evaluation is inherently position-dependent. A high post-up rate is expected for a big but unusual for a guard. A 40 percent three-point clip means something different for a point guard than for a center. Before the main ranking model can evaluate prospects, it needs to know what role each one is likely to play.

PRISM’s role predictions assign each prospect a probability distribution across three offensive archetypes (creator, spacer, big) and three defensive archetypes (perimeter, help, anchor). A prospect with 45 percent creator, 35 percent spacer, and 20 percent big is meaningfully different from one at 90/5/5 — the first is a “tweener”, the second fits the archetype. Either way, the classification is potentially useful.

These role probabilities get used in various ways by the model. They enter the ranking model directly as features. They define positional baselines, so that a prospect’s playstyle can be evaluated relative to what’s normal for their predicted role. And they drive a “tweener score”, which measures how cleanly a player maps to a single archetype.
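The exact tweener formula isn’t given here. One plausible way to measure how concentrated a role distribution is would be normalized entropy, sketched below. Both the choice of entropy and the direction of the scale (higher means more of a tweener) are assumptions for illustration.

```python
import math


def tweener_score(role_probs):
    """Normalized Shannon entropy of a role distribution.

    Returns 0.0 when all probability sits on one archetype and 1.0 when it is
    spread evenly. This is an assumed stand-in, not PRISM's actual formula.
    """
    k = len(role_probs)
    entropy = -sum(p * math.log(p) for p in role_probs if p > 0)
    return entropy / math.log(k)


# The two example prospects from the text: creator / spacer / big.
tweener = tweener_score([0.45, 0.35, 0.20])
clear_role = tweener_score([0.90, 0.05, 0.05])
```

Under this sketch, the 45/35/20 prospect scores much closer to 1 than the 90/5/5 prospect.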

Playstyle versatility is often an indicator of tweener status — prospects who spread their possessions across isolation, spot-up, transition, and self-creation without a dominant mode tend to have less concentration in any one archetype. But tweener-ness isn’t about current production: tweeners and clear-role prospects have nearly identical impact metrics on average in college. Whether that ambiguity helps or hurts depends on the rest of a player’s profile. Among on-ball creators and bigs, clear roles predict stronger NBA development. The league still needs point guards who run offenses and centers who anchor defenses. But among spacers, tweeners can actually develop better — the modern NBA rewards shooters who can do a little of everything without being locked into one mode.

Stage 2: Preseason score

PRISM evaluates each prospect’s current-season college statistics. But for returning players — sophomores, juniors, seniors — there’s an additional signal available: how they performed last year. A player who was already producing at a high level as a sophomore and then improves as a junior is a fundamentally different bet than a junior who’s having a breakout season after two mediocre years.

Stage 3: Pairwise ranking

PRISM then takes the outputs of Stages 1 and 2 alongside dozens of features covering box score production, advanced impact metrics, physical measurements, shot creation data, playtype frequencies, and strength of schedule, and uses them to produce head-to-head comparisons for every pair in the draft pool.

Our training data spans the 2010 through 2021 draft classes, covering the BartTorvik era, when comprehensive play-by-play college statistics became available. Rather than detailing every feature, it’s more useful to describe the assumptions behind them.

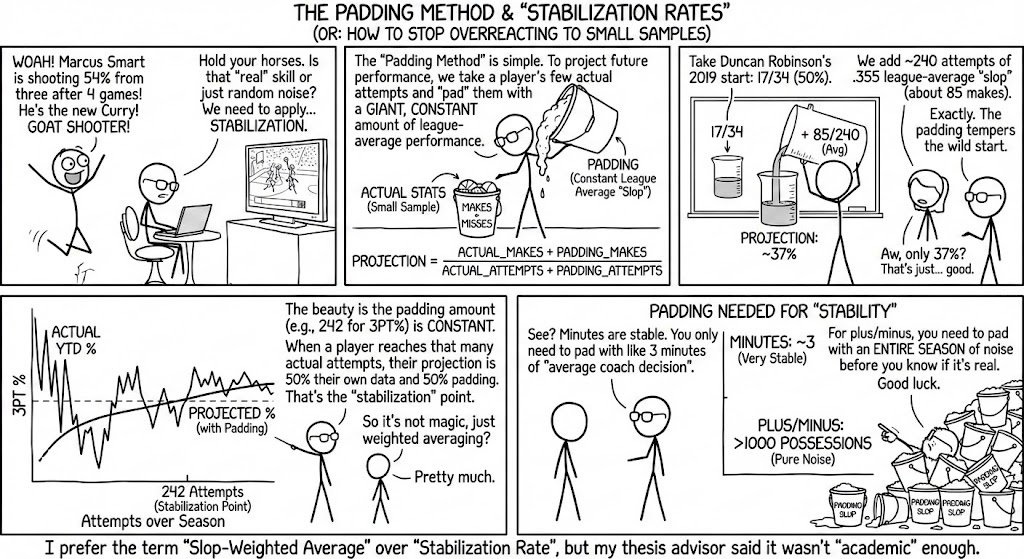

Bayesian padding

College statistics are built on small samples. A prospect who shoots 15-for-40 from three has a 37.5 percent clip, but that’s 40 attempts — roughly the same number an NBA player takes in two weeks. Raw percentages at that volume are dominated by noise. PRISM addresses this through Bayesian padding, which shrinks each player’s shooting and rate statistics toward a prior expectation based on two levels of information. Every player is pulled toward a weighted blend of role-group averages — a prospect who is 60 percent creator and 40 percent spacer gets a blend of both groups' shooting expectations.

For returning players, this role-based prior is further refined by their own prior-season stats: a sophomore who shot 30 percent on 200 attempts last year will have a prior anchored mostly to his own history, while one with only 20 prior attempts will stay closer to the role group. Freshmen, with no individual history, get the role mean directly. How much a player is pulled toward the prior depends on sample size: a player with 200 three-point attempts barely moves, while a player with 30 attempts moves substantially. This padding is applied to our box score and play-by-play stats. Importantly, it happens before any composite features are computed, so downstream metrics like expected points per 100 possessions from each shooting zone are built on stabilized inputs rather than raw noise.
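The two-level prior can be sketched with a simple pseudo-count shrinkage. The role-group averages, the `PRIOR_STRENGTH` value, and the function names below are all hypothetical; the article doesn’t publish the actual prior strengths or the exact estimator.

```python
PRIOR_STRENGTH = 100  # hypothetical: the prior counts as this many attempts


def role_prior(role_probs, role_means):
    """Blend role-group averages, weighted by the prospect's role probabilities."""
    return sum(role_probs[role] * role_means[role] for role in role_probs)


def shrink(makes, attempts, prior_rate, prior_strength=PRIOR_STRENGTH):
    """Pull a raw rate toward prior_rate; fewer attempts means a stronger pull."""
    return (makes + prior_rate * prior_strength) / (attempts + prior_strength)


# Hypothetical role-group three-point averages.
role_means = {"creator": 0.34, "spacer": 0.37, "big": 0.30}
probs = {"creator": 0.6, "spacer": 0.4, "big": 0.0}
prior = role_prior(probs, role_means)

# Returning player: refine the role prior with last season (30% on 200 attempts).
refined = shrink(60, 200, prior)

# Same raw 37.5% clip at two different volumes, both against the role prior.
small_sample = shrink(15, 40, prior)
large_sample = shrink(75, 200, prior)
```

With these made-up settings, the 40-attempt shooter is pulled noticeably further from his raw 37.5 percent than the 200-attempt shooter, and the returning player’s prior lands closer to his own history than to the role group.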

Teammate context and player development

Team context affects development trajectory. PRISM incorporates two features designed to separate genuine talent from circumstantial production. The first is BPM share, which measures a player’s individual BPM relative to his team’s minute-weighted average. A player with a BPM share well above 1.0 is producing far above his teammates — he’s the engine of his team’s performance, and his stats are less likely to be inflated by surrounding talent. A player near or below 1.0 is producing in line with or below his teammates, which doesn’t mean he’s bad, but it means the model should weigh his raw numbers with more skepticism.
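A minimal sketch of BPM share, assuming it is a plain ratio of the player’s BPM to the team’s minute-weighted average. The roster numbers are invented, and how PRISM handles teams with a zero or negative average isn’t stated, so this sketch simply refuses to compute a ratio in that case.

```python
def bpm_share(player_bpm, roster):
    """Ratio of a player's BPM to his team's minute-weighted average BPM.

    roster is a list of (bpm, minutes) tuples for the whole team, including
    the player himself.
    """
    total_minutes = sum(minutes for _, minutes in roster)
    team_avg = sum(bpm * minutes for bpm, minutes in roster) / total_minutes
    if team_avg <= 0:
        # Assumption: a ratio is only meaningful for a positive team average.
        raise ValueError("team average BPM must be positive for a ratio")
    return player_bpm / team_avg


# Hypothetical roster: one +8.0 star and two +2.0 teammates, equal minutes.
roster = [(8.0, 1000), (2.0, 1000), (2.0, 1000)]
share = bpm_share(8.0, roster)
```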

The second is BPM trajectory, which tracks whether he’s been improving over the course of his college seasons. The raw slope is then shrunk toward the expected development rate for his class year (sophomore, junior, senior). The reasoning behind this is simple: sophomores are expected to improve more than seniors, so a sophomore’s modest improvement is treated as less noteworthy than the same jump from a senior. Freshmen receive no trajectory value since there’s no prior season to measure against. The feature captures something intuitive: a junior whose BPM has climbed steadily from 2.0 to 5.0 to 8.0 across three seasons is a fundamentally different prospect than one who jumped from 3.0 to 8.0 in a single year, even if their current-season numbers are identical. The first pattern suggests sustained development; the second might be a statistical outlier.
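The shrinkage step alone might look something like the sketch below. The expected yearly gains, the `SHRINK` weight, and the use of a least-squares slope are assumptions; the article doesn’t specify how the raw slope is computed or how strongly it is shrunk.

```python
# Hypothetical expected BPM gain per season, by class year.
EXPECTED_GAIN = {"sophomore": 1.5, "junior": 1.0, "senior": 0.5}
SHRINK = 0.5  # hypothetical weight placed on the class-year expectation


def raw_slope(bpm_by_season):
    """Least-squares slope of BPM against season index (0, 1, 2, ...)."""
    n = len(bpm_by_season)
    x_mean = (n - 1) / 2
    y_mean = sum(bpm_by_season) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(bpm_by_season))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


def bpm_trajectory(bpm_by_season, class_year):
    """Raw slope shrunk toward the class-year expectation; None for freshmen."""
    if len(bpm_by_season) < 2:
        return None  # no prior season to measure against
    slope = raw_slope(bpm_by_season)
    return (1 - SHRINK) * slope + SHRINK * EXPECTED_GAIN[class_year]


steady_junior = bpm_trajectory([2.0, 5.0, 8.0], "junior")
freshman = bpm_trajectory([4.0], "freshman")
```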

Age does the heavy lifting

Age, along with advanced impact metrics, accounts for a lot of the differentiation between prospects. In combination, these features separate players who are far apart in quality and development. This is by design: these metrics have proven to be predictive of future impact metrics. Age is particularly important as a lever. Players who are younger are assumed to have more physical and skill development left, which would allow them to eclipse their older classmates. But this also depends on how offensively slanted a prospect is. Older offensive prospects are assumed to be closer to their offensive peak than younger players in their class, while that same premium doesn’t necessarily exist on defense. Even older defensive centers, for example, are considered to be valuable prospects, which makes sense, as defensive development tends to be less steep than offensive development early in players’ NBA careers.

Using EPM development curves, we can see that the steepest development actually happens at age 19. This carries an inherent selection bias (19-year-olds in the NBA were drafted because they can develop that well, and therefore receive more opportunity), but consider that seniors are still behind the curve.

You’ll notice that teams often swing for very high-upside players — this is natural when you consider the NBA’s salary structure, which punishes high-commitment contracts for non-elite production. The difference in development between players who peak at +4.0 EPM and those who peak at +2.0 EPM widens over time, and elite players stay above replacement level far longer, facing a less steep decline than merely “good” players.

There’s an interesting thought experiment that might divide many in the draft community. Say Player A has a 100 percent chance of becoming a “good” player — peaking around +1.85 EPM by year 5. Player B has a 25 percent chance of becoming “elite” and a 75 percent chance of landing at “above average.” Who would you rather have?

On pure expected value, Player A looks better: +1.85 peak EPM versus an expected +0.96 for Player B. But in a league that caps individual salaries, expected value isn’t the whole picture. Teams are more likely weighing expected surplus: production relative to what the player is paid.

Both players are cheap for years 1 through 4 on the rookie scale. The decision point comes at year 5, when the max extension kicks in. Player A is good enough to demand a max in restricted free agency but never quite good enough to justify it. This is the Domantas Sabonis contract, the Ja Morant contract — players in the gap between +2.0 and +4.0 who get paid like stars and produce more like solid starters.

Player B profiles differently. In the 25 percent scenario where he becomes elite, you have a franchise cornerstone producing +4.7 EPM on a max deal: massive surplus, and the kind of player championships are built around. But if he’s “merely” above-average, you simply don’t (or at least you shouldn’t) extend him at the max. He’ll walk into free agency or re-sign at a discount, and you’ll lose nothing beyond the draft pick and rookie-scale investment.

This is exactly why NBA teams often optimize for upside rather than for expected value. Combined with aging curves, it also explains why older, creation-driven prospects tend to fall in the draft: they’re unlikely to warrant the kind of investment that gives them positive EV on a max contract.

Of course, this makes selecting the right players at the top even more important. An important but sometimes overlooked part of development curves is how they begin — future “elite” players post +0.01 EPM as rookies, essentially neutral, while the next tier down is already at -0.93. The gap between tiers is visible from year one, even though few rookies are actually good in an absolute sense. The implication isn’t that teams should draft for immediate production — it’s that the signal is there early if you know where to look. PRISM is designed to find it: by anchoring on current college production adjusted for age and context, it can separate which young prospects are tracking toward elite trajectories versus which are merely projectable. The difference between those two outcomes, as the aging curves show, is the difference between a franchise-altering max contract and a roster-clogging one.

Custom features decide close calls

Where we hope PRISM’s edge comes from is a set of custom-engineered features that differentiate similarly-ranked prospects. In head-to-head matchups between players at similar impact levels, secondary features often decide the outcome.

One of the more predictive features, steal rate, has long been treated as a proxy for cognition: it suggests an understanding of positioning, which is valuable for both creator archetypes and big men. That intuition holds up in EPM production: players with a positive relative STL/100 show steeper developmental gains deeper into their careers.

Position-adjusted playstyles measure how a player’s shot diet and play-type distribution compare to others in their predicted roles. A big who shoots more than the average big, or a guard who posts up more than the average guard, represents a meaningful deviation from positional norms. These deviations help the model identify stylistic outliers whose production might translate differently than their archetype would suggest.

Self-creation metrics from play-by-play measure how much of a player’s scoring comes off their own creation versus assisted looks. This is one of the clearest distinctions between scalable and role-dependent production. A player who makes 3 unassisted threes per 100 possessions is demonstrating a skill that is valued in the NBA; a player whose threes are almost entirely assisted is more context-dependent.

Still, draft outcomes may put too much emphasis on self-creators. The data bears this out: high self-creation in college doesn’t particularly predict stronger NBA development.

Physical measurements are amplifiers, not drivers

Height, wingspan, length (wingspan minus height), and BMI are included in the model, but they function more as amplifiers on top of existing production than as standalone predictors. Fifteen years of data tells us that combine measurements and athletic testing are noisy as standalone signals. If you want an athletic guard because athletic guards get to the rim, a guard who already gets to the rim is a good bet, regardless of how he tests at the combine.

Position-adjusted physicals — how tall or long a player is relative to their predicted role — carry more weight than raw measurements. Being 6’9” matters differently depending on whether you’re projected as a guard or a big.

Wingspan is an interesting feature, particularly because its importance differs by position. For perimeter defenders, length (the difference between wingspan and height) matters more. For anchors, pure wingspan matters more. For helpers, neither has particular importance. Instead, those players show their defensive value through activity: generating stops, steals and blocks, and posting high defensive impact metrics in college. Rebounding also generates positive outcomes and shouldn’t be ignored as a part of defense.

Rolling windows and temporal integrity

Prospects are scored within three-year rolling pools. The 2026 class is pooled with 2024 and 2025; the 2025 class with 2023 and 2024; and so on. This keeps PRISM scores interpretable across years by providing a consistent reference frame. The pooling approach also allows us to get around era effects. Since players aren’t going to be compared against players ten years in the past or future, we aren’t penalizing prospects from previous classes for less “modern” playstyles.
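The pooling rule is simple enough to write down directly. The helper name `rolling_pool` and the sample prospect list are invented for this sketch.

```python
def rolling_pool(class_year, window=3):
    """Draft classes scored together with class_year: it plus the prior two."""
    return list(range(class_year - window + 1, class_year + 1))


# Hypothetical prospect records as (name, draft_class) tuples.
prospects = [("A", 2023), ("B", 2024), ("C", 2025), ("D", 2026)]

pool_years = rolling_pool(2026)
pool_2026 = [name for name, year in prospects if year in pool_years]
```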

Training pair construction

Not all training comparisons are equally informative. PRISM distinguishes three types of pairs:

Survivor–Survivor: Both players earned meaningful NBA minutes. These are the most valuable comparisons and are included without modification.

Survivor–Bust: One player stuck, the other didn’t. These are downweighted. The reasoning: separating NBA players from non-NBA players is relatively easy and not the model’s primary job. We want capacity allocated to the harder problem of distinguishing between viable prospects.

Bust–Bust: Both players washed out. These comparisons are excluded entirely. The ordering between two players who never stuck in the league — did a guy provide below-replacement-level production for one season or two? — is noisy at best.
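The three pair types above can be sketched as a small pair-building routine that also produces the feature differences used as training examples. The `BUST_WEIGHT` value, the dictionary field names, and the sample prospects are hypothetical; the article says only that Survivor–Bust pairs are downweighted, not by how much.

```python
from itertools import combinations

BUST_WEIGHT = 0.3  # hypothetical downweight for Survivor-Bust pairs


def build_pairs(prospects):
    """Return (feature_diff, label, weight) rows for every usable pair.

    Each prospect is a dict with 'features' (list of floats), 'ewins' (float)
    and 'survivor' (bool: earned meaningful NBA minutes).
    """
    rows = []
    for a, b in combinations(prospects, 2):
        if not a["survivor"] and not b["survivor"]:
            continue  # Bust-Bust: excluded entirely
        both_stuck = a["survivor"] and b["survivor"]
        weight = 1.0 if both_stuck else BUST_WEIGHT
        diff = [x - y for x, y in zip(a["features"], b["features"])]
        label = 1 if a["ewins"] > b["ewins"] else 0  # 1 means a had the better career
        rows.append((diff, label, weight))
    return rows


# Invented prospects: two survivors and two busts.
sample = [
    {"features": [1.0, 2.0], "ewins": 20.0, "survivor": True},
    {"features": [0.5, 1.0], "ewins": 5.0, "survivor": True},
    {"features": [0.2, 0.1], "ewins": 0.0, "survivor": False},
    {"features": [0.1, 0.3], "ewins": 0.0, "survivor": False},
]
rows = build_pairs(sample)
```

The weights would then be handed to the classifier as per-example weights, so that Survivor–Survivor comparisons dominate the fit.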

Why wins above replacement?

For our player comparisons, we use eWINS — wins created above replacement level according to EPM, setting negative seasons to zero — rather than a player’s rate of production as measured by EPM. This is among the more “controversial” design choices of the model, but we think it’s the right one. eWINS account for playing time and volume, without punishing players for below-replacement-level performance if they’re still learning on the job. The model’s primary job is ranking prospects against each other, and most of the separation between draft outcomes isn’t between good NBA players and slightly better NBA players. It’s between players who make it and players who don’t. Roughly half of all drafted prospects never establish a meaningful NBA career.

EPM can’t cleanly represent this. A player who never reaches the NBA has no EPM. You’re forced to either exclude him from training entirely or invent a penalty value and hope it lands in the right place. But you can calculate his eWINS: 0.

More specifically, we sum eWINS across a player’s first seven NBA seasons, with heavier weight on the later windows where minutes are earned rather than granted by draft hype. This means the target rewards sustained production, not just a single outstanding year. A player who is a solid starter for six seasons accumulates more eWINS than one who has two brilliant years and washes out — and that distinction reflects real draft value.
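A minimal sketch of that target follows, assuming one weight per season. The `SEASON_WEIGHTS` values are invented (the article says only that later seasons count more), and the two sample careers are made up to mirror the comparison above.

```python
# Hypothetical per-season weights for NBA seasons 1 through 7.
SEASON_WEIGHTS = [0.8, 0.8, 1.0, 1.0, 1.2, 1.2, 1.2]


def ewins_target(season_ewins):
    """Weighted sum of eWINS over the first seven seasons.

    Negative seasons are floored at zero, and a player who never reached the
    NBA (an empty list) gets a target of 0.0.
    """
    total = 0.0
    for weight, wins in zip(SEASON_WEIGHTS, season_ewins):
        total += weight * max(wins, 0.0)
    return total


steady_starter = ewins_target([3.0, 3.0, 3.0, 3.0, 3.0, 3.0])
two_year_flash = ewins_target([8.0, 8.0])
never_played = ewins_target([])
```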

Scouting-based rankings serve as PRISM’s prior

PRISM has no access to combine athletic testing (vertical leap, agility, sprint times), interview or character intelligence, medical evaluations, or coaching assessments. This is partly a data limitation and partly by design. The model is scoped to statistical production and measurable context instead.

But to compensate for this missing information, we incorporate a prior based on consensus scouting rankings. The prior is weighted more heavily toward the start of the season, when statistical measures of production aren’t reliable either. In early November, a prospect may have played three games. His statistics are essentially noise. But consensus draft boards (aggregated from scouts, media, and market signals) contain real information about a player’s talent level, even if imperfect. As games accumulate, the model’s evaluation gradually takes over, and by roughly early February, the model’s statistical measures dominate.
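One way to picture the handoff from prior to model is a weight that decays with games played. The exponential form and the `half_life_games` value below are assumptions chosen for illustration; the actual schedule isn’t published here.

```python
def prior_weight(games_played, half_life_games=10):
    """Weight on the consensus prior; halves every half_life_games (assumed)."""
    return 0.5 ** (games_played / half_life_games)


def blended_score(model_score, prior_score, games_played):
    """Mix the consensus-implied score with the model's statistical score."""
    w = prior_weight(games_played)
    return w * prior_score + (1 - w) * model_score


# Hypothetical prospect: consensus implies 0.80, early stats imply 0.40.
early_november = blended_score(0.40, 0.80, games_played=3)
early_february = blended_score(0.40, 0.80, games_played=25)
```

With these made-up settings, the early-November score sits close to the consensus value and the early-February score sits close to the model’s.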

The mapping from consensus rank to expected PRISM score is not linear. The gap in expected outcome between the consensus #1 and #5 prospect is far larger than the gap between #30 and #35 — the tails are steep. We calibrate this mapping empirically using a non-parametric method that captures the shape of the tails.

We also adjust the prior to account for variation in class quality. The consensus #10 prospect in a historically loaded class represents a meaningfully different talent level than the #10 prospect in a shallow class. If we applied the same anchor to both, we would systematically undervalue prospects in strong classes and overvalue those in weak ones. To address this, we compute draft strength scalars for each class using the pairwise matrices, which compare every prospect against every other prospect in the same rolling pool. The scalar measures how often prospects from a given class beat prospects from adjacent classes in the model’s head-to-head evaluations. Lottery-range and late-class prospects receive separate scalars, since a class can be deep at the top without being strong overall, or vice versa.
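A rough sketch of a class strength scalar computed from a pairwise matrix follows. It uses the mean cross-class win probability as the measure and ignores the lottery versus late-class split. The function name, the matrix, and the class labels are invented.

```python
def class_strength(win_prob, class_of, target_class):
    """Mean win probability of target-class prospects against other classes.

    win_prob[i][j] is the probability that prospect i beats prospect j, and
    class_of[i] is prospect i's draft class within the same rolling pool.
    """
    n = len(class_of)
    probs = [
        win_prob[i][j]
        for i in range(n)
        for j in range(n)
        if class_of[i] == target_class and class_of[j] != target_class
    ]
    return sum(probs) / len(probs)


# Hypothetical pool: prospects 0-1 are from 2025, prospects 2-3 from 2026.
class_of = [2025, 2025, 2026, 2026]
win_prob = [
    [0.50, 0.60, 0.40, 0.45],
    [0.40, 0.50, 0.30, 0.35],
    [0.60, 0.70, 0.50, 0.55],
    [0.55, 0.65, 0.45, 0.50],
]
strength_2026 = class_strength(win_prob, class_of, 2026)
strength_2025 = class_strength(win_prob, class_of, 2025)
```

A value above 0.5 marks a class that tends to win its cross-class matchups; restricting the rows to lottery-range or late-class prospects would give the separate scalars described above.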

PRISM vs. consensus

PRISM isn’t afraid to take big swings. In backtesting, among players who earned NBA minutes, PRISM has a significant edge over consensus rankings in pairwise accuracy. Consensus rankings, which correlate strongly with draft capital and therefore opportunity, are better at “finding” players that stick in the league. But among the players who do stick, consensus is barely better than a coin flip at telling you who will be better.

PRISM’s biggest raises have surfaced real value — typically productive college players whose translatable skills get discounted because they lack the pedigree or physical tools that scouts fixate on. Multiple players that ranked significantly above their consensus position became quality NBA contributors with positive impact metrics.

Volatility scores, trajectories and draft simulator

Using our pairwise probabilities, we can assess how a player’s feature profile translates against different tiers of competition. A player’s volatility score, which compares their average win probability against the top K prospects with their average against the bottom K, captures how consistently they separate from the better players in their draft class. A prospect whose ranking is driven primarily by dominating lower-tier prospects, rather than competing with the top of the pool, may have a lower ceiling but a higher floor.
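The two ingredients of the volatility score can be sketched as below. How they are combined into a single number (a difference, a ratio, or something else) isn’t stated, so this version returns both averages. The matrix, the ranking, and the choice of K are invented.

```python
def tier_win_rates(win_prob, ranked, player, k):
    """Average win probability against the top k and bottom k of the pool.

    ranked lists prospect indices from best to worst PRISM score. The player
    is skipped if he falls inside either tier, so k should be at least 2.
    """
    top = [j for j in ranked[:k] if j != player]
    bottom = [j for j in ranked[-k:] if j != player]
    vs_top = sum(win_prob[player][j] for j in top) / len(top)
    vs_bottom = sum(win_prob[player][j] for j in bottom) / len(bottom)
    return vs_top, vs_bottom


# Hypothetical 4-prospect pool, ranked best to worst as 2, 3, 0, 1.
win_prob = [
    [0.50, 0.60, 0.40, 0.45],
    [0.40, 0.50, 0.30, 0.35],
    [0.60, 0.70, 0.50, 0.55],
    [0.55, 0.65, 0.45, 0.50],
]
ranked = [2, 3, 0, 1]
vs_top, vs_bottom = tier_win_rates(win_prob, ranked, player=0, k=2)
```

A wide gap between the two numbers describes a prospect who beats up on the bottom of the pool but struggles against the top.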

Additionally, we measure which players peak earlier or later. These features, along with PRISM scores and role projections, give us a good starting point for something fun: a draft simulator. Using roster composition, player labels (franchise player, core, expendable, rotation), and team designations (blank slate, rebuilding with assets, rising, contending, aging contender), we can develop a better sense of which players a team would logically target. Generally, rebuilding teams will draft the best player available, while teams on a contending timeline would prefer prospects who would make an immediate impact and fit within their current roster scheme.

PRISM can’t account for everything

PRISM is best treated as a signal, not a verdict. The model has no access to medical or injury information, interview intelligence, or coaching assessments.

Draft projection is inherently imperfect. NBA decisions and outcomes reflect more than college production alone, even if college production is the strongest indicator.

